Learning 3D Compliant Flow Matching Policies from Force & Demonstration-Guided Simulation Data

* Equal Contribution · University of Pennsylvania

What We Built

Our framework uses a lightweight scheme to generate force-informed training data entirely in simulation from a single human demonstration. With this data, we train policies that attend to both point clouds and force measurements and produce passive impedance outputs. At execution time, our passive impedance controller ensures compliant, safe interactions. Together, these components enable robots to generalize from simple geometry in simulation to unseen real-world objects and spatial configurations.

See It In Action

Abstract

While visuomotor policies have advanced in recent years, contact-rich tasks remain a challenge. Robotic manipulation tasks that require continuous contact demand explicit handling of compliance and force. However, most visuomotor policies ignore compliance, overlooking the importance of physical interaction with the real world, which often leads to excessive contact forces or fragile behavior under uncertainty. Introducing force information into vision-based imitation learning could improve contact awareness, but could also require large amounts of data to perform well. One remedy for data scarcity is to generate data in simulation, yet computationally expensive processes are required to generate data good enough to avoid the Sim2Real gap. In this work, we introduce a framework for generating force-informed data in simulation, instantiated by a single human demonstration, and show how coupling it with a compliant policy improves the performance of a visuomotor policy learned from synthetic data. We validate our approach on real-robot tasks, including non-prehensile block flipping and bi-manual object moving, where the learned policy exhibits reliable contact maintenance and adaptation to novel conditions.

Framework Pipeline

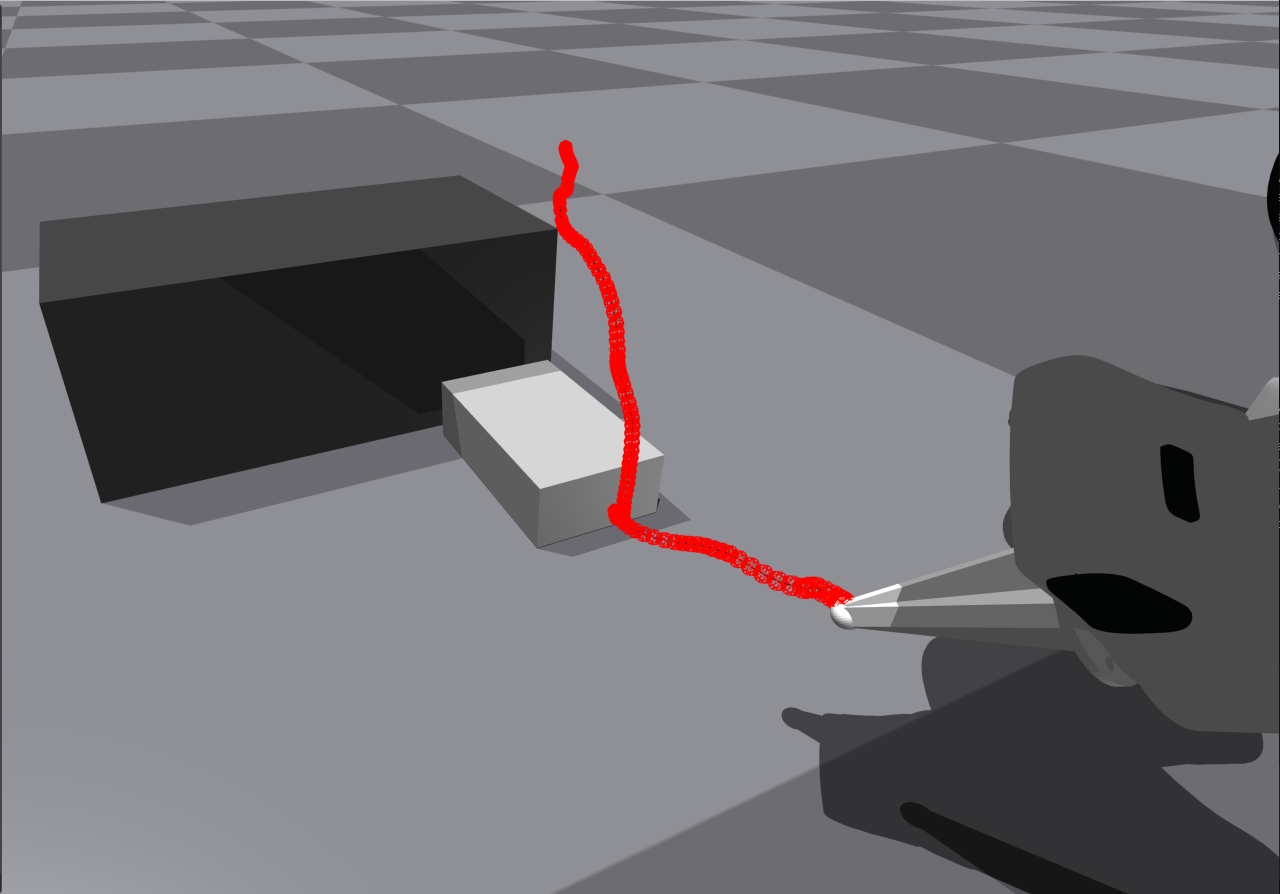

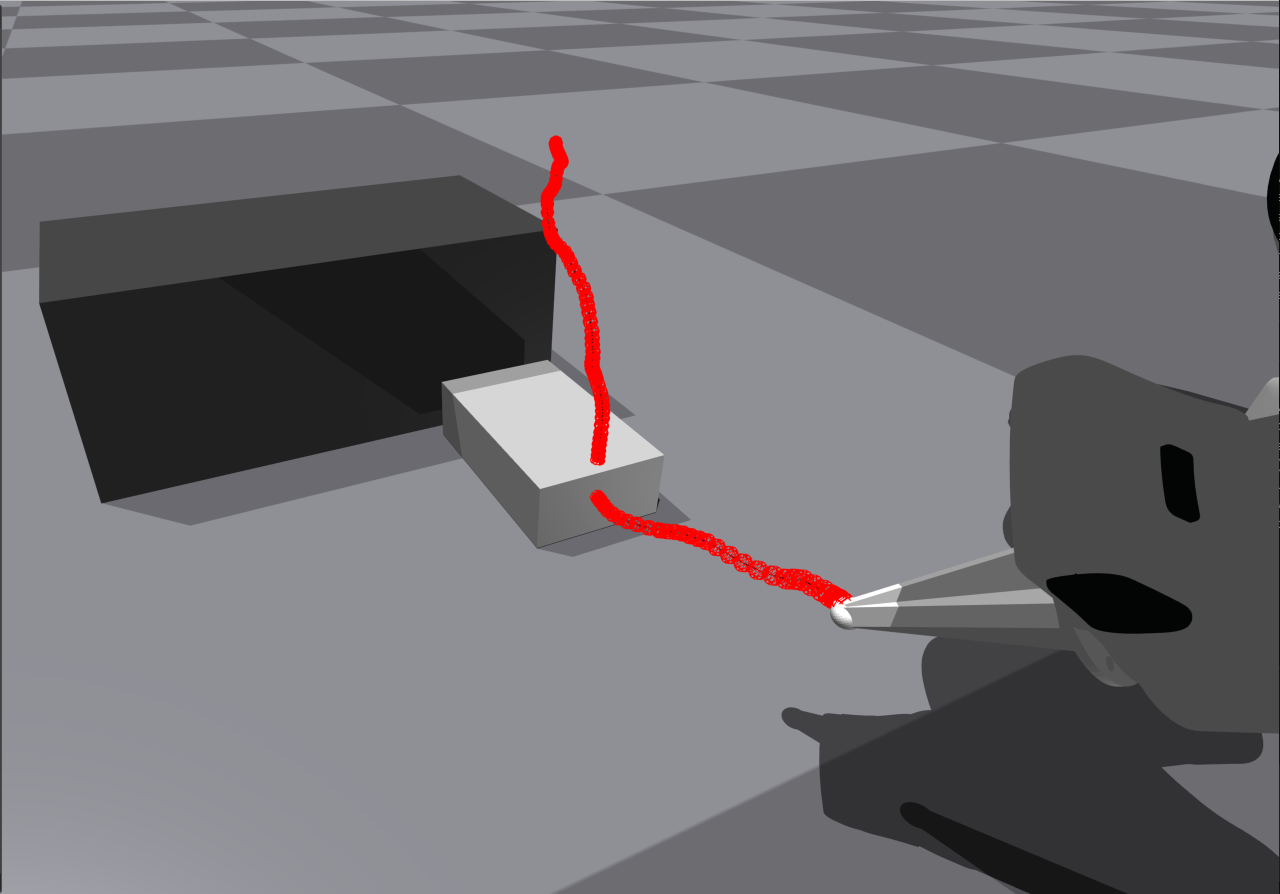

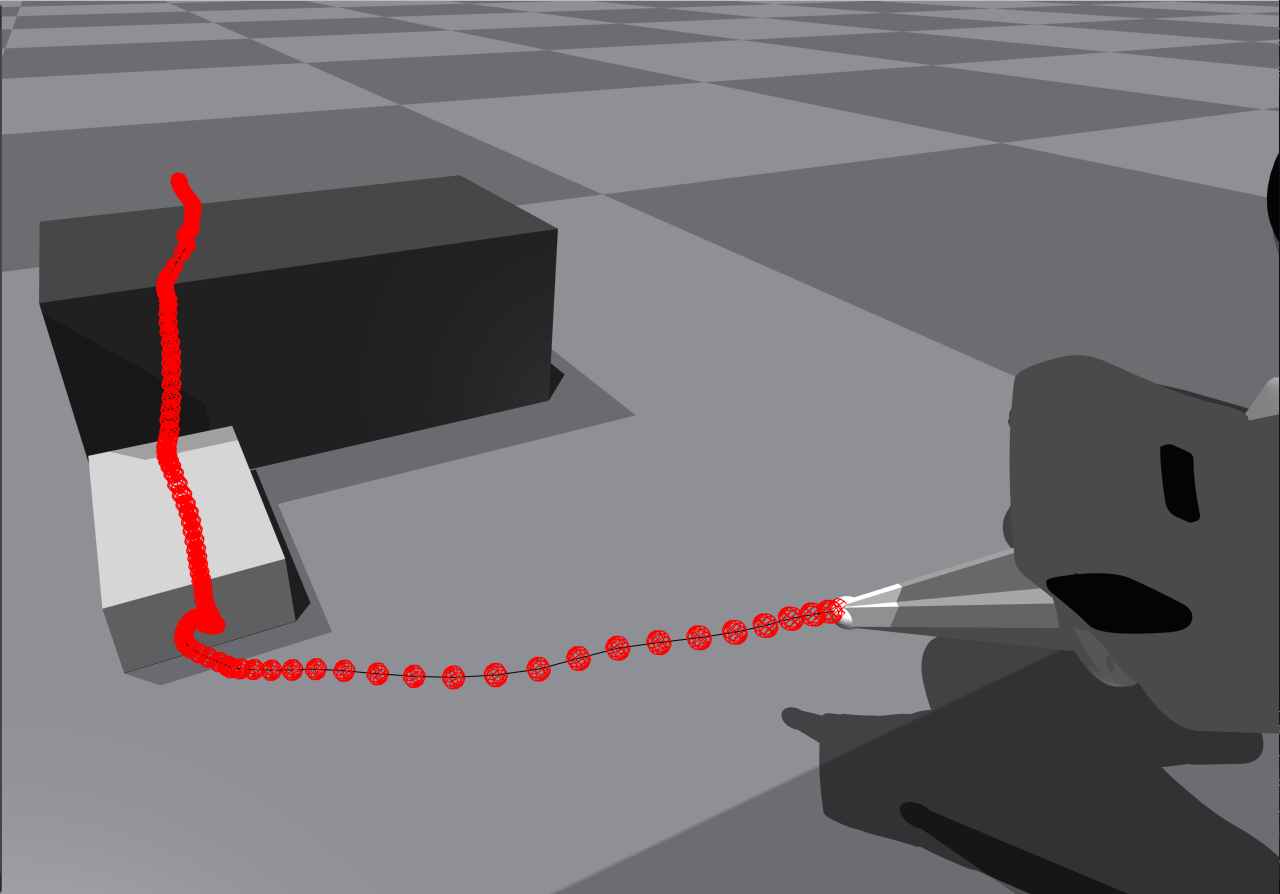

Trajectory Modulation Strategies

From a single demonstration, we generate diverse training data by combining Laplacian editing with force-informed virtual targets — no additional human demos or training needed. Each strategy adds a different axis of variation entirely in simulation.

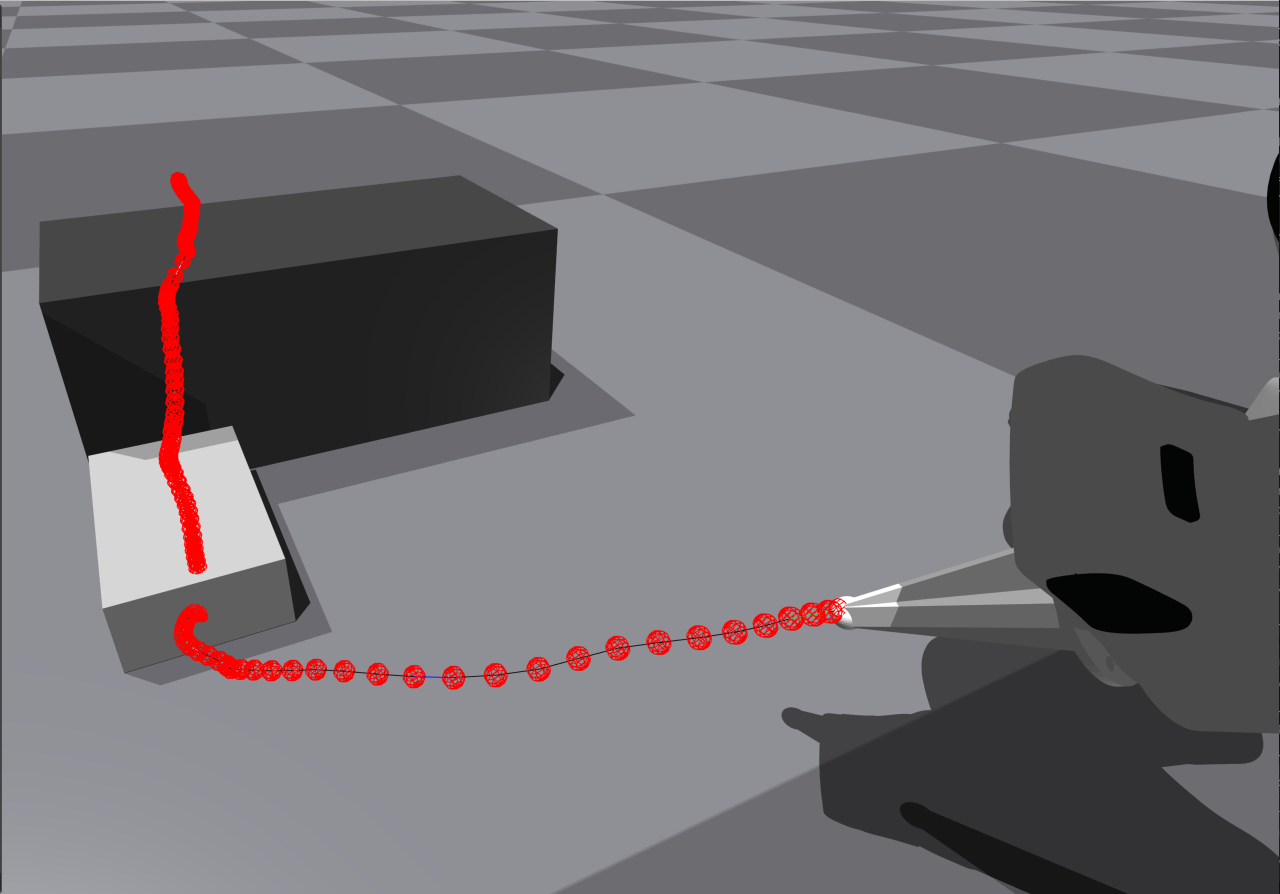
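
For readers unfamiliar with Laplacian editing, the following is a minimal sketch of the general technique, not the authors' implementation. It deforms a demonstrated trajectory so that selected waypoints move to new positions while the local shape (the discrete second differences) is preserved in a least-squares sense. The function name `laplacian_edit`, the `anchors` dictionary, and the `weight` parameter are hypothetical choices for illustration, and the force-informed virtual targets described above are not modeled here.

```python
import numpy as np


def laplacian_edit(traj, anchors, weight=1e3):
    """Deform an (N x D) trajectory so anchored waypoints reach new positions.

    anchors maps waypoint index -> desired position (length-D sequence).
    The discrete Laplacian of the original trajectory is preserved in a
    least-squares sense; anchors are enforced as soft constraints.
    """
    traj = np.asarray(traj, dtype=float)
    n, d = traj.shape

    # Second-difference operator on interior waypoints:
    # row i encodes x[i-1] - 2*x[i] + x[i+1].
    lap = np.zeros((n - 2, n))
    for i in range(1, n - 1):
        lap[i - 1, i - 1] = 1.0
        lap[i - 1, i] = -2.0
        lap[i - 1, i + 1] = 1.0
    delta = lap @ traj  # Laplacian coordinates of the demonstration

    # One soft positional constraint row per anchored waypoint.
    con = np.zeros((len(anchors), n))
    targets = np.zeros((len(anchors), d))
    for row, (idx, pos) in enumerate(anchors.items()):
        con[row, idx] = 1.0
        targets[row] = pos

    # Stack shape-preservation and anchor equations, then solve.
    a_mat = np.vstack([lap, weight * con])
    b_mat = np.vstack([delta, weight * targets])
    new_traj, *_ = np.linalg.lstsq(a_mat, b_mat, rcond=None)
    return new_traj


# Illustrative demonstration: a shallow arc in the x-y plane (made-up data).
t = np.linspace(0.0, 1.0, 20)
demo = np.stack([t, 0.1 * np.sin(np.pi * t), np.zeros_like(t)], axis=1)

# Keep the start fixed and move the goal 0.3 along y.
new_goal = demo[-1] + np.array([0.0, 0.3, 0.0])
edited = laplacian_edit(demo, {0: demo[0], len(demo) - 1: new_goal})
```

Changing the anchor positions yields a new trajectory variant from the same demonstration, which is one way a single demo could be spread across different start and goal configurations.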

Non-Prehensile Block Flipping

Trained on a single simple-geometry demo in simulation. Evaluated zero-shot on unseen real objects and spatial configurations.

Six real-world objects with varied shapes, masses, and surface properties — each flipped with the same policy.

The same policy handling varied initial positions and orientations across the workspace.

Bi-Manual Object Moving

Two arms coordinate through contact forces to transport objects — generalizing across object geometry and start/goal layouts.

Different object shapes moved bi-manually with compliant contact maintenance throughout.

Varied start and goal configurations across the table surface.

Human Intervention & Stacked Objects

Testing robustness beyond the evaluation set — the policy handles mid-execution human interference and attempts to flip stacked objects it was never trained on.

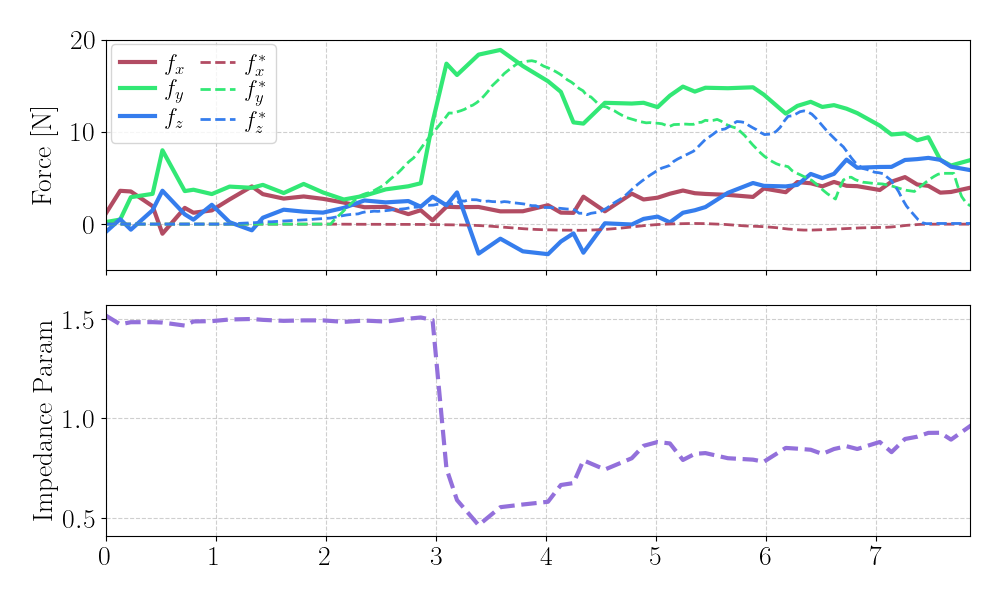

Learned Impedance Behavior

Force and impedance profiles from Block Flipping. As the contact force builds along the y-axis during the flip, the learned compliance gain increases in response. In effect, the policy becomes softer to maintain safe, continuous contact, without any hand-coded rules.
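
To illustrate why a softer gain limits contact force, here is a minimal one-axis sketch using a generic spring-damper impedance law, F = K (x_d - x) + D (v_d - v). This is an assumption for illustration only: it is not the passive impedance controller used in this work, and the function names, stiffness values, and distances below are hypothetical.

```python
def impedance_force(stiffness, damping, x_target, x, v_target=0.0, v=0.0):
    """Generic spring-damper force along one axis."""
    return stiffness * (x_target - x) + damping * (v_target - v)


def steady_contact_force(stiffness, x_target, x_wall):
    """Static force on a rigid surface at x_wall.

    If the commanded target lies beyond the surface, the end effector rests
    on the surface (zero velocity) and the spring term sets the push force.
    """
    x = min(x_target, x_wall)  # the end effector cannot pass the surface
    return impedance_force(stiffness, 0.0, x_target, x)


# Same commanded target 2 cm past the surface, two hypothetical stiffness values.
stiff_force = steady_contact_force(stiffness=800.0, x_target=0.05, x_wall=0.03)
soft_force = steady_contact_force(stiffness=200.0, x_target=0.05, x_wall=0.03)
print(f"stiff: {stiff_force:.1f} N, soft: {soft_force:.1f} N")
```

With the same target offset, the contact force scales with stiffness, so lowering stiffness (raising compliance) as contact force builds keeps the interaction force bounded.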

We thank our anonymous reviewers, who provided thorough and fair feedback that improved the quality of our paper. This work was supported by the National Science Foundation (NSF) Foundational Research in Robotics (FRR) program under NSF CAREER Award Grant No. FRR-2443721.

BibTeX

@article{li2025flow,
  title={Flow with the Force Field: Learning 3D Compliant Flow Matching Policies from Force and Demonstration-Guided Simulation Data},
  author={Li, Tianyu and Li, Yihan and Zhang, Zizhe and Figueroa, Nadia},
  journal={arXiv preprint arXiv:2510.02738},
  year={2025}
}